Security operations teams face a structural problem that technology alone cannot solve: threats emerge faster than detection systems can identify them. A geopolitical event occurs. A supply chain disruption spreads. A security incident affects an upstream dependency. By the time these events propagate through manual log aggregation and alert triage, response windows have closed.

The underlying challenge is architectural, not technological. Most organizations rely on expensive, inflexible SIEM platforms to aggregate data and detect anomalies. These platforms are designed for breadth (capture everything) rather than depth (detect what matters to your business). The result is alert fatigue. Response times are measured in hours instead of minutes. Compliance requirements demand audit trails and decision traceability, but proprietary platforms obscure the logic behind alerts.

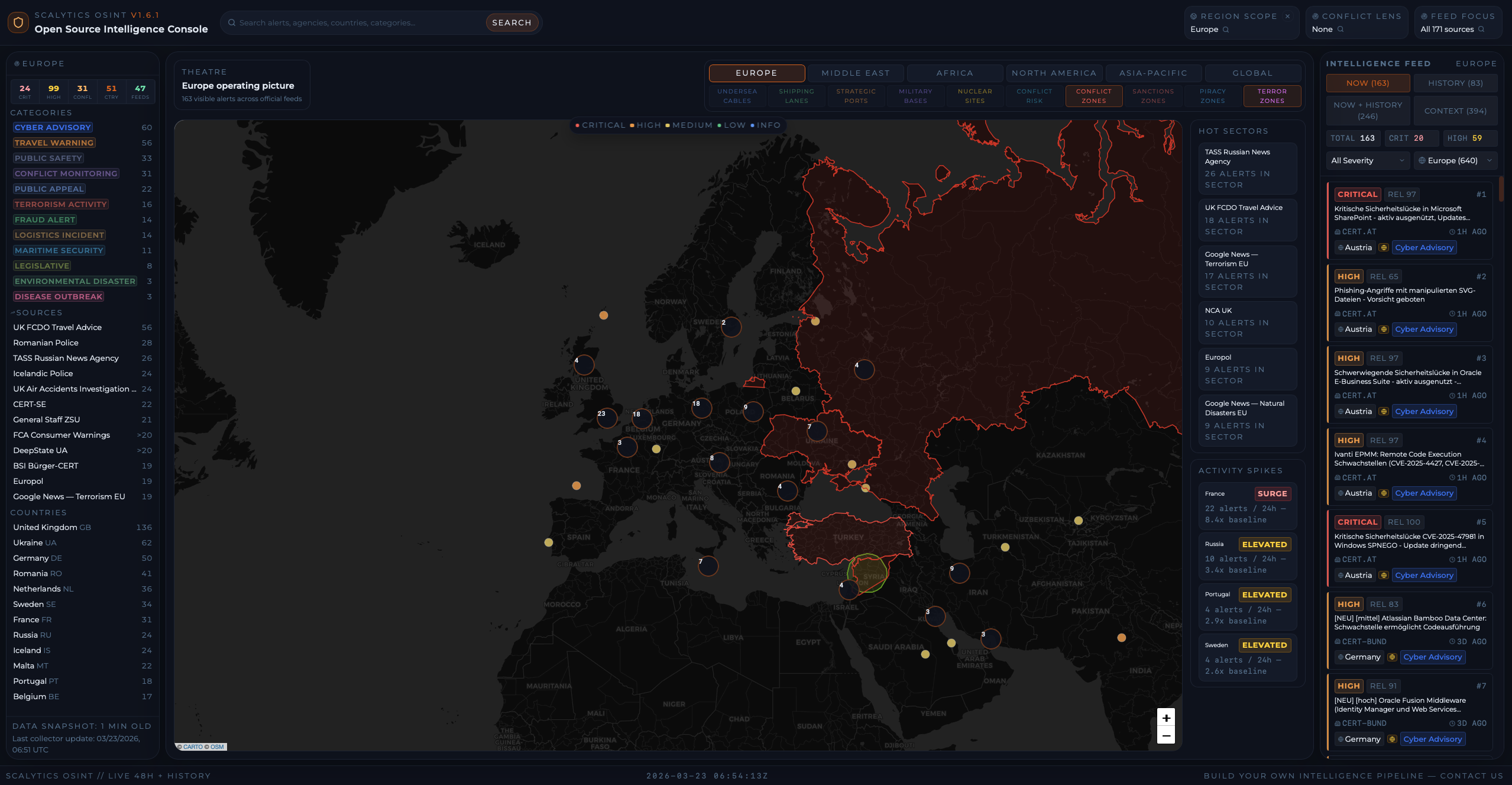

There is a better approach: open-source intelligence gathering paired with production-grade streaming infrastructure. This article outlines EUOSINT, a Scalytics open-source project for building custom, real-time OSINT monitoring systems without vendor lock-in. The foundation is Kafka-based streaming, which handles the durability and scale requirements that real-time threat detection demands.

Bottom Line

Expensive SIEM platforms are inflexible and reactive. Open-source intelligence gathering with Kafka streaming enables real-time threat detection, complete audit trails, and sector-specific customization. Organizations can replace proprietary SIEM platforms with EUOSINT-based systems that detect anomalies in milliseconds, cost 80% less, and provide transparent detection logic.

The Problem: Reactive Intelligence and SIEM Fragmentation

Organizations invest heavily in threat intelligence feeds, security monitoring tools, and alert platforms. Yet operational teams remain reactive. This is not a failure of tools. It is a failure of architecture.

Traditional SIEMs aggregate logs and generate alerts based on predefined rules. A rule fires. An analyst reviews the alert. The analyst investigates baseline behaviors and threat context. By then, 30 minutes have passed. In that window, a threat actor has moved laterally across multiple systems. A competitor has adjusted pricing in response to market signals. A supply chain disruption has cascaded across dependent fulfillment systems.

The second constraint is inflexibility. SIEM rule engines are powerful but opaque. Rules live in proprietary syntax. Changing rules requires vendor support or specialized staff certified in the platform's configuration language. Teams cannot quickly adapt detection logic to sector-specific threats. A financial services firm needs to monitor regulatory announcements and sanctions lists. A software company needs to watch for API deprecations and security advisories in upstream dependencies. A critical infrastructure operator needs to track geopolitical events and weather patterns. All three organizations likely use the same SIEM vendor, but none can efficiently encode their specific intelligence requirements without extensive customization and vendor consulting fees.

The third constraint is economic. Enterprise SIEM licenses cost $500K-$2M annually for mid-market organizations. Scaling to higher data volumes means higher license tiers. The pricing model punishes organizations for collecting more data, which creates an incentive to discard potentially valuable signals. Teams face a choice: limit data collection to minimize licensing costs, or accept ever-increasing expenses as monitoring scope expands.

EU regulatory requirements under DORA (Digital Operational Resilience Act) mandate real-time monitoring and complete audit trails for financial institutions. Compliance requires demonstrable control over detection logic and traceability for every alert. Proprietary SIEMs obscure this control. Custom, open-source systems enable it. Organizations cannot outsource compliance to vendors; they own the operational liability.

Approach: Streaming Intelligence with EUOSINT and Kafka

EUOSINT is an open-source intelligence stack designed to collect, correlate, and detect anomalies across multiple data sources with sub-second latency.

Most open-source OSINT tools stop at collection. EUOSINT does not. It is built to ingest live data, correlate signals across sources, detect anomalies and spikes as they emerge, and support conflict prediction in near real time. This is not a lightweight dashboard on top of a few feeds, and it is not a deploy-in-one-click toy. It is the open-source edition of a system we built through real operational use, shaped by the problems that only show up when you run intelligence workflows continuously. We removed protected integrations and non-public components, but kept the system shape that matters: continuous refresh, operational control, analyst usability, and analysis that turns raw open-source data into usable signal. EUOSINT comes with undersea cable data, shipping lanes, one of the most comprehensive military base datasets, nuclear reactors, terror zones, conflict zones, conflict prediction, forecasting, and more.

Component 1: Flexible Data Collection

Ingest data from multiple sources: RSS feeds, APIs (X/Twitter, threat intelligence platforms, regulatory sources), internal logs, application events. EUOSINT provides connectors for common sources and a framework for building custom collectors. The key architectural constraint is flexibility. Different organizations monitor entirely different signals.

A financial services firm needs sanctions announcements and regulatory guidance updates. A critical infrastructure operator needs weather and geopolitical event feeds. A SaaS company needs API deprecation notices and upstream vendor security disclosures. A manufacturing company needs supply chain disruption feeds. A generic platform cannot serve all of these without trade-offs.

Example collectors:

- X API: monitor security researchers, threat actors, sector-specific accounts

- Telegram: monitor public channels relevant to your sector

- RSS feeds: news, regulatory announcements, industry-specific advisories

- Public threat intelligence APIs: malware samples, vulnerability feeds, IP reputation

- Internal application logs: errors, authentication failures, performance anomalies

- Custom webhooks: partner notifications, supplier alerts, customer-reported incidents
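As a rough illustration of what a custom collector could look like, the sketch below parses an RSS feed into flat event records and hands each one to a `publish` callable. The event fields, the `collect` and `parse_rss` function names, and the sample feed are assumptions made for this example and are not taken from the EUOSINT codebase. In a real deployment `publish` would wrap a Kafka producer; here it is injected so the logic can be run without a broker.

```python
import json
import xml.etree.ElementTree as ET
from typing import Callable, Dict, List


def parse_rss(xml_text: str, source: str) -> List[Dict[str, str]]:
    """Turn RSS <item> elements into flat event dictionaries.

    The field names are a hypothetical event shape, not EUOSINT's schema.
    """
    root = ET.fromstring(xml_text)
    events = []
    for item in root.iter("item"):
        events.append({
            "source": source,
            "title": (item.findtext("title") or "").strip(),
            "link": (item.findtext("link") or "").strip(),
            "published": (item.findtext("pubDate") or "").strip(),
        })
    return events


def collect(xml_text: str, source: str, topic: str,
            publish: Callable[[str, bytes], None]) -> int:
    """Parse a feed and pass each event to publish(topic, payload)."""
    events = parse_rss(xml_text, source)
    for event in events:
        publish(topic, json.dumps(event, sort_keys=True).encode("utf-8"))
    return len(events)


# Made-up feed content, used only to exercise the collector.
SAMPLE_FEED = """<rss version="2.0"><channel>
  <title>Example regulator</title>
  <item>
    <title>New sanctions guidance published</title>
    <link>https://example.org/guidance/1</link>
    <pubDate>Mon, 06 Jan 2025 09:00:00 GMT</pubDate>
  </item>
</channel></rss>"""

if __name__ == "__main__":
    sent = []  # stands in for a Kafka producer
    count = collect(SAMPLE_FEED, "example_regulator",
                    "regulatory_announcements",
                    lambda topic, payload: sent.append((topic, payload)))
    print(count, sent[0][0])
```

Keeping the publishing step behind a plain callable is one way to test collectors in isolation before pointing them at a live cluster.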

Component 2: Stream Processing Backbone (Kafka)

All ingested data flows into Kafka topics. Kafka provides three critical properties for real-time threat detection: durability (messages persist for replay and forensic analysis), scalability (partitions enable parallel processing and horizontal scaling), and decoupling (producers and consumers operate independently, enabling architectural flexibility).

Topics organize data by source and signal type:

- raw_tweets

- regulatory_announcements

- threat_intelligence

- application_logs

- supplier_alerts

- geopolitical_events

Kafka's storage durability enables replay: if a detection rule changes, you can reprocess historical data to validate the rule before deploying to production. This is critical for tuning detection sensitivity without disrupting live operations.
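The replay idea can be sketched without a broker. The `InMemoryLog` class below is a stand-in for a single Kafka partition (append-only, addressed by offset) and is not a Kafka client API. The two rules and the sample events are assumptions chosen to show how a candidate rule can be compared with the live one over the same history.

```python
from typing import Any, Callable, Dict, Iterator, List, Tuple

Event = Dict[str, Any]


class InMemoryLog:
    """Stand-in for one Kafka partition: append-only, read by offset."""

    def __init__(self) -> None:
        self._records: List[Event] = []

    def append(self, event: Event) -> int:
        self._records.append(event)
        return len(self._records) - 1  # offset of the new record

    def read_from(self, offset: int) -> Iterator[Tuple[int, Event]]:
        for position in range(offset, len(self._records)):
            yield position, self._records[position]


def replay(log: InMemoryLog, rule: Callable[[Event], bool],
           start_offset: int = 0) -> List[int]:
    """Return the offsets of all retained events the rule would fire on."""
    return [offset for offset, event in log.read_from(start_offset)
            if rule(event)]


# Hypothetical history of supplier alerts.
log = InMemoryLog()
for hours in (2, 30, 12, 48):
    log.append({"alert_type": "delivery_delay", "duration_hours": hours})


def current_rule(event: Event) -> bool:
    return event["duration_hours"] > 24


def candidate_rule(event: Event) -> bool:
    return event["duration_hours"] > 10


print(replay(log, current_rule))    # [1, 3]
print(replay(log, candidate_rule))  # [1, 2, 3]
```

Against a real cluster, the same comparison would typically run under a separate consumer group, because committed offsets are tracked per group and the live consumers are then left untouched.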

Component 3: Real-Time Enrichment

Stream processors consume raw data from Kafka topics and apply enrichment: add geolocation context, normalize timestamps, extract entities (company names, people, threat indicators), correlate across multiple sources. Enrichment is critical because raw signals are often incomplete or inconsistent.

Example enrichment logic:

- A threat intelligence feed mentions a vulnerability using one naming convention (CVE-2024-12345)

- Another feed uses a different reference (GHSA-xxxx-yyyy-zzzz)

- Enrichment normalizes both to a canonical vulnerability identifier and adds CVSS score, affected software, patch status
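The normalization step above might look roughly like the following. The alias table, the metadata values, and the function names are all hypothetical and exist only to make the example runnable; a real enrichment stage would look these up in a vulnerability database.

```python
import re
from typing import Any, Dict, Optional

CVE_PATTERN = re.compile(r"CVE-\d{4}-\d{4,}", re.IGNORECASE)
GHSA_PATTERN = re.compile(r"GHSA(-[0-9a-z]{4}){3}", re.IGNORECASE)

# Hypothetical alias table using the placeholder identifiers from the text.
GHSA_TO_CVE = {"GHSA-xxxx-yyyy-zzzz": "CVE-2024-12345"}

# Illustrative values only, not real advisory data.
VULN_METADATA = {
    "CVE-2024-12345": {
        "cvss": 7.5,
        "affected_software": "example-lib",
        "patch_status": "patched",
    },
}


def canonical_id(raw: str) -> Optional[str]:
    """Map a CVE or GHSA reference found in free text to one canonical ID."""
    match = CVE_PATTERN.search(raw)
    if match:
        return match.group(0).upper()
    match = GHSA_PATTERN.search(raw)
    if match:
        # Normalize case: upper-case prefix, lower-case suffix.
        ghsa = "GHSA" + match.group(0)[4:].lower()
        return GHSA_TO_CVE.get(ghsa, ghsa)
    return None


def enrich(event: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of the event with a canonical ID and known metadata."""
    enriched = dict(event)
    vuln_id = canonical_id(str(event.get("text", "")))
    enriched["vuln_id"] = vuln_id
    enriched.update(VULN_METADATA.get(vuln_id, {}))
    return enriched


print(enrich({"text": "Advisory ghsa-xxxx-yyyy-zzzz affects example-lib"}))
```

Events from both feeds end up keyed by the same `vuln_id`, which is what makes cross-source correlation possible downstream.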

Component 4: Detection Engine

Rules evaluate enriched signals and trigger alerts when conditions match. Rules are human-readable (not proprietary syntax) and version-controlled in Git:

    rule: "anomalous_supplier_disruption"
    triggers_on:
      - source: "supplier_alerts"
        condition: "alert_type == 'delivery_delay' AND duration_hours > 24"
      - source: "logistics_api"
        condition: "shipment_status == 'delayed' AND count > 50"
    correlate_within: "10 minutes"
    alert_if: "both_sources_triggered"
    severity: "critical"
    escalate_to:
      - pagerduty_oncall
      - slack_supply_chain

Rules are evaluated in real time with sub-second latency. Because rules live in Git, every rule change has an audit trail, and rolling back a rule is a single git revert.
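To make the correlation semantics concrete, here is a minimal sketch of how a rule like the one above could be evaluated. It is not EUOSINT's engine. The conditions are written as plain Python functions because the rule expression language is not specified here, and the `Correlator` class and its clear-after-alert behavior are assumptions of this example.

```python
from datetime import datetime, timedelta
from typing import Any, Dict, Optional

Event = Dict[str, Any]


def supplier_delay(event: Event) -> bool:
    return (event.get("alert_type") == "delivery_delay"
            and event.get("duration_hours", 0) > 24)


def logistics_delay(event: Event) -> bool:
    return (event.get("shipment_status") == "delayed"
            and event.get("count", 0) > 50)


# Python rendering of the rule; conditions are callables by assumption.
RULE = {
    "rule": "anomalous_supplier_disruption",
    "triggers_on": {
        "supplier_alerts": supplier_delay,
        "logistics_api": logistics_delay,
    },
    "correlate_within": timedelta(minutes=10),
    "severity": "critical",
}


class Correlator:
    """Fires once every source has matched within the correlation window."""

    def __init__(self, rule: Dict[str, Any]) -> None:
        self.rule = rule
        self.last_hit: Dict[str, datetime] = {}

    def observe(self, source: str, event: Event,
                at: datetime) -> Optional[Dict[str, Any]]:
        condition = self.rule["triggers_on"].get(source)
        if condition is None or not condition(event):
            return None
        self.last_hit[source] = at
        window = self.rule["correlate_within"]
        for other in self.rule["triggers_on"]:
            seen = self.last_hit.get(other)
            if seen is None or abs(at - seen) > window:
                return None  # another source has not matched recently
        self.last_hit.clear()  # avoid re-alerting on the same pair
        return {"rule": self.rule["rule"],
                "severity": self.rule["severity"],
                "at": at.isoformat()}


correlator = Correlator(RULE)
start = datetime(2025, 1, 6, 9, 0)
print(correlator.observe(
    "supplier_alerts",
    {"alert_type": "delivery_delay", "duration_hours": 30}, start))
print(correlator.observe(
    "logistics_api",
    {"shipment_status": "delayed", "count": 75},
    start + timedelta(minutes=5)))
```

The first call returns `None` because only one source has matched; the second returns an alert because both sources matched within ten minutes of each other.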

Component 5: Alerting and Dashboards

Alerts route to multiple destinations: Slack, PagerDuty, email, webhooks, or custom integration. Dashboards show real-time signal health, alert history, and correlation timelines. Scalytics helps teams design alerting architectures that integrate with existing incident management systems.

Trade-Offs: Operational Complexity vs. Detection Capability

EUOSINT requires upfront investment in rule development and operational discipline if you don't rely on the open ruleset we publish. This is a real trade-off.

SIEM Vendor Approach:

- Operational simplicity: vendor maintains rules; your team reacts

- Detection quality: generic (one-size-fits-all)

- Long-term cost: $500K-$2M annually

- Customization: vendor quotes and consulting projects

EUOSINT Open-Source Approach:

- Operational complexity: your team owns rule development

- Detection quality: high (sector-specific, custom-tuned)

- Long-term cost: infrastructure (Kafka) + team time

- Customization: immediate (change rules anytime)

For organizations with dedicated security teams (5+ people), EUOSINT's operational complexity is acceptable. The payoff is substantial: detection sensitivity tuned to your specific business, faster response times, and an 80% cost reduction compared to SIEM licensing.

For small organizations (1-2 security staff), SIEM vendor simplicity may be justified. Rule development overhead is too high relative to operational benefit.

Implementation: Five-Week Path to Real-Time Threat Detection

Week 1: Establish Detection Priorities

What are the top threats your organization cares about? For a financial services firm: sanctions violations, regulatory violations, account takeover, market manipulation. For critical infrastructure: supply chain disruptions, geopolitical escalation, weather events. For e-commerce: competitor pricing changes, logistics disruptions, payment processor outages.

Document these threats and the signals that would indicate them. This becomes your rule development roadmap.

Week 2: Deploy Kafka Infrastructure

Set up a production Kafka cluster, use a managed service (Confluent Cloud, Aiven), or run KafScale, which is Kafka-compatible and keeps data in S3-style object storage. Configure topics for your data sources. Set retention policies (7 days for debugging, 90 days for compliance replay).
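Kafka expresses topic retention in milliseconds through the `retention.ms` topic setting, so the 7-day and 90-day policies need converting. The sketch below does that arithmetic and builds a per-topic config map. Which topics count as compliance-relevant is an assumption made for the example; your own classification will differ.

```python
from typing import Dict, Iterable, Set

DEBUG_DAYS = 7
COMPLIANCE_DAYS = 90


def retention_ms(days: int) -> int:
    """Convert a retention period in days to milliseconds."""
    return days * 24 * 60 * 60 * 1000


def topic_configs(topics: Iterable[str],
                  compliance_topics: Set[str]) -> Dict[str, Dict[str, str]]:
    """Build a retention.ms setting for each topic."""
    configs = {}
    for topic in topics:
        days = COMPLIANCE_DAYS if topic in compliance_topics else DEBUG_DAYS
        configs[topic] = {"retention.ms": str(retention_ms(days))}
    return configs


TOPICS = [
    "raw_tweets",
    "regulatory_announcements",
    "threat_intelligence",
    "application_logs",
    "supplier_alerts",
    "geopolitical_events",
]

# Assumption: only these two topics need the longer compliance window.
COMPLIANCE_TOPICS = {"regulatory_announcements", "threat_intelligence"}

for topic, config in topic_configs(TOPICS, COMPLIANCE_TOPICS).items():
    print(topic, config)
```

Seven days works out to 604,800,000 ms and ninety days to 7,776,000,000 ms.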

Week 3: Integrate Data Sources

Deploy collectors for your top internal data sources. Start with API sources (ERP, SAP, Salesforce, threat intelligence APIs) before adding databases or observability logs. Validate that data is flowing into the correct Kafka topics. Inspect messages to understand the schema and ensure consistency.
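One lightweight way to check schema consistency is to run sampled messages through a small validator. The envelope below (`source`, `timestamp`, `payload`) is a hypothetical message shape for illustration; substitute whatever fields your collectors actually emit.

```python
import json
from datetime import datetime
from typing import List

# Hypothetical message envelope: field name -> expected Python type.
REQUIRED_FIELDS = {"source": str, "timestamp": str, "payload": dict}


def validate_message(raw: bytes) -> List[str]:
    """Return a list of problems found in one message (empty if none)."""
    try:
        message = json.loads(raw)
    except ValueError as exc:  # covers malformed JSON and bad encodings
        return [f"not valid JSON: {exc}"]
    if not isinstance(message, dict):
        return ["top-level value is not an object"]

    problems = []
    for field, expected in REQUIRED_FIELDS.items():
        if field not in message:
            problems.append(f"missing field: {field}")
        elif not isinstance(message[field], expected):
            problems.append(
                f"wrong type for {field}: expected {expected.__name__}")

    timestamp = message.get("timestamp")
    if isinstance(timestamp, str):
        try:
            datetime.fromisoformat(timestamp)
        except ValueError:
            problems.append("timestamp is not ISO 8601")
    return problems


good = b'{"source": "erp", "timestamp": "2025-01-06T09:00:00+00:00", "payload": {}}'
bad = b'{"source": "erp", "timestamp": "yesterday"}'
print(validate_message(good))
print(validate_message(bad))
```

Running a check like this on a sample from each topic during Week 3 tends to surface inconsistent timestamps and missing fields before they reach the rule engine.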

Week 4: Develop and Test Detection Rules

Write rules for your own threat detection engine based on your internal needs. Use historical data (replay from Kafka) to validate rule logic before production deployment. Measure false positive rate (sensitivity tuning is critical). Rules that fire constantly are useless; rules that miss real threats are worse.

Example rule development cycle:

- Write rule in JSON

- Git commit for version control

- Replay historical data against rule

- Measure: true positives, false positives, detection latency

- Adjust rule if false positive rate is too high

- Deploy to production when satisfied
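The measurement step in this cycle can be sketched as a small evaluation harness. It assumes you have historical events labelled as real threats or not; the labels, the sample data, and the `RuleReport` and `evaluate` names are hypothetical. Detection latency is left out because it depends on the live pipeline rather than on rule logic.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, Iterable, Tuple

Event = Dict[str, Any]


@dataclass
class RuleReport:
    true_positives: int = 0
    false_positives: int = 0
    false_negatives: int = 0

    @property
    def alerts(self) -> int:
        return self.true_positives + self.false_positives

    @property
    def false_alert_share(self) -> float:
        """Fraction of fired alerts that were not real threats."""
        return self.false_positives / self.alerts if self.alerts else 0.0

    @property
    def recall(self) -> float:
        """Fraction of real threats the rule caught."""
        actual = self.true_positives + self.false_negatives
        return self.true_positives / actual if actual else 0.0


def evaluate(rule: Callable[[Event], bool],
             labelled: Iterable[Tuple[Event, bool]]) -> RuleReport:
    """Replay labelled events through a rule and count the outcomes."""
    report = RuleReport()
    for event, is_threat in labelled:
        fired = rule(event)
        if fired and is_threat:
            report.true_positives += 1
        elif fired:
            report.false_positives += 1
        elif is_threat:
            report.false_negatives += 1
    return report


# Made-up labelled history: (event, was it a real disruption?)
HISTORY = [
    ({"duration_hours": 30}, True),
    ({"duration_hours": 26}, False),
    ({"duration_hours": 12}, True),
    ({"duration_hours": 2}, False),
]

report = evaluate(lambda e: e["duration_hours"] > 24, HISTORY)
print(report, report.false_alert_share, report.recall)
```

On this toy history the rule fires twice, once correctly and once not, and misses one real disruption, so half of its alerts are false and it catches half of the real threats.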

Week 5: Establish Operational Procedures

Document:

- How to add a new data source (update collector config, add Kafka topic)

- How to develop and deploy a new rule (write JSON, test, git commit, merge triggers deployment)

- How to handle alert storms (disable rule, investigate false positives, iterate)

- How to replay events (use Kafka offset reset for forensic analysis)
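For the alert-storm procedure, one possible safeguard is a per-rule guard that disables the rule automatically once it exceeds an alert budget, leaving the investigation to an analyst. The `StormGuard` class and its thresholds below are assumptions for illustration, not an EUOSINT feature.

```python
from collections import deque
from datetime import datetime, timedelta
from typing import Deque


class StormGuard:
    """Disables a rule when it fires more than max_alerts within window."""

    def __init__(self, max_alerts: int, window: timedelta) -> None:
        self.max_alerts = max_alerts
        self.window = window
        self.recent: Deque[datetime] = deque()
        self.disabled = False

    def allow(self, at: datetime) -> bool:
        """Return True if an alert raised at this time should be delivered."""
        if self.disabled:
            return False
        # Drop alerts that have aged out of the sliding window.
        while self.recent and at - self.recent[0] > self.window:
            self.recent.popleft()
        self.recent.append(at)
        if len(self.recent) > self.max_alerts:
            self.disabled = True  # stays off until an analyst resets it
            return False
        return True

    def reset(self) -> None:
        """Re-enable the rule after the false positives are investigated."""
        self.recent.clear()
        self.disabled = False


guard = StormGuard(max_alerts=3, window=timedelta(minutes=1))
start = datetime(2025, 1, 6, 9, 0)
decisions = [guard.allow(start + timedelta(seconds=10 * i)) for i in range(4)]
print(decisions, guard.disabled)
```

Four alerts inside one minute exceed the budget of three, so the fourth is suppressed and the rule stays disabled until `reset` is called.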

Schedule a consultation with Scalytics to establish these operational procedures and validate your rule development approach.

Next Steps

Open-source intelligence gathering is no longer the domain of security researchers and nation-states. Organizations can now build custom, real-time threat detection systems that outperform expensive vendor SIEM platforms in speed, flexibility, and cost.

Immediate action: Audit your current threat detection strategy.

Document:

- What data sources do you currently monitor (logs, feeds, APIs)?

- How long does it take from threat emergence to alert (minutes, hours)?

- How many alerts does your SIEM generate daily? What percentage are actionable?

- What sector-specific threats are you most concerned about?

- What is your current annual SIEM spend?

If more than 50% of your alerts are false positives, response times exceed 30 minutes, or annual SIEM spend exceeds $300K, EUOSINT is justified.

Explore EUOSINT on GitHub and deploy a test cluster in your environment. Kafka streaming for threat detection is operationally accessible; most organizations can deploy a working detection pipeline in 4-6 weeks.

Schedule an architecture review with Scalytics to assess your current threat detection landscape and design a custom EUOSINT implementation. We help organizations replace expensive SIEM platforms with open-source intelligence stacks that detect anomalies in milliseconds, provide complete audit trails, and enable sector-specific threat hunting.

Real-time threat detection is a competitive advantage. Organizations that move from reactive SIEM alerts to proactive, streaming-based intelligence will detect threats 10x faster than competitors still waiting for vendor-generated alerts.

About Scalytics

Our founding team created Apache Wayang (now an Apache Top-Level Project), the federated execution framework that orchestrates Spark, Flink, and TensorFlow where data lives and reduces ETL movement overhead.

We also invented and actively maintain KafScale (S3-Kafka-streaming platform), a Kafka-compatible, stateless data and large object streaming system designed for Kubernetes and object storage backends. Elastic compute. No broker babysitting. No lock-in.

Our mission: Data stays in place. Compute comes to you. From data lakehouses to private AI deployment and distributed ML, everything is designed for security, compliance, and production resilience.

Questions? Join our open Slack community or schedule a consult.